Last updated: May 9, 2026 | Reading time: 10 minutes

Introduction – The Hidden AI Epidemic

Your employees are using AI that IT never approved. They paste customer data into ChatGPT. They ask Claude to summarize confidential board meeting notes. They generate code using Gemini and copy it directly into production systems. And most of the time, you have no idea.

This phenomenon is called Shadow AI – the use of artificial intelligence tools, models, and agents without explicit authorization from an organization’s IT or security team. It mirrors the “Shadow IT” wave of the 2010s, where employees adopted cloud apps (Dropbox, Slack, Trello) behind IT’s back. But Shadow AI is far more dangerous.

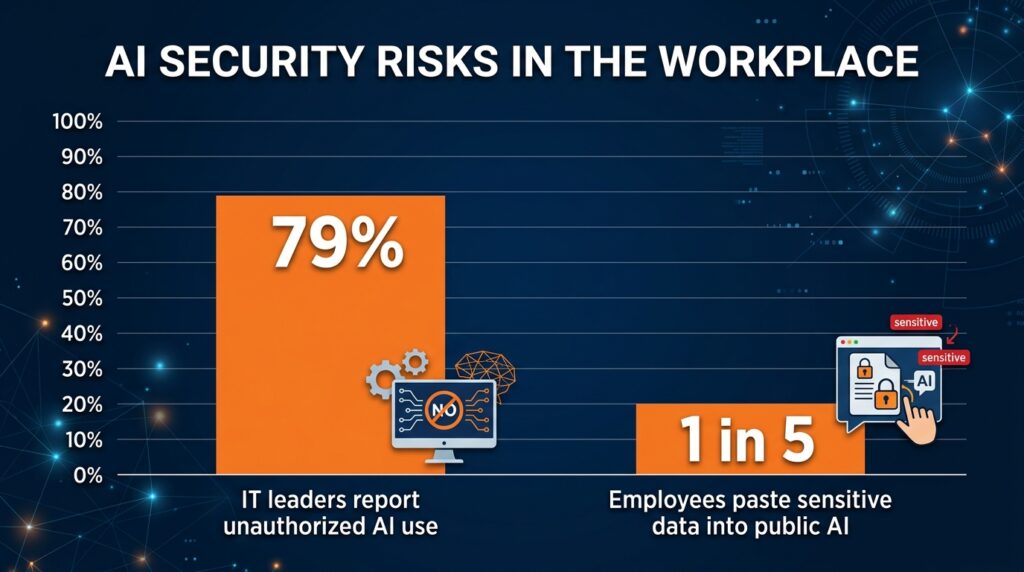

According to a 2026 survey by Netskope, 79% of IT leaders report that employees have deployed unauthorized AI agents or tools within the last 12 months. The average employee uses three or more unapproved AI tools daily. And nearly one in five have pasted sensitive corporate data into a public AI model.

This article explains why Shadow AI is happening, why it’s a growing security and compliance nightmare, and how companies can regain control without killing innovation.

Quick Summary – What Every Business Leader Needs to Know

| Question | Answer |
|---|---|
| What is Shadow AI? | Use of generative AI tools (ChatGPT, Claude, Gemini, DeepSeek) without IT approval. |
| How widespread is it? | 79% of IT leaders report unauthorized AI use in their organization; the real number is likely higher. |
| What’s the biggest risk? | Data leakage – employees pasting confidential information into public AI models that may train on that data. |
| What else can go wrong? | Compliance violations (HIPAA, GDPR, CCPA), biased hiring decisions, insecure code generation, and contractual breaches. |
| Can you stop it? | Not completely, but you can manage and control it with policy, training, and technical controls. |

1. What Is Shadow AI? – The New Shadow IT

In the early 2010s, employees brought their own cloud apps – Dropbox, Evernote, Google Docs – without IT’s knowledge. That was Shadow IT. Today, the same pattern is repeating with AI, but the stakes are much higher.

Shadow AI includes:

- Public chatbots – Employees using ChatGPT, Claude, Gemini, or DeepSeek to draft emails, summarize documents, or answer questions.

- AI coding assistants – GitHub Copilot, Cursor, or Codeium generating code that ends up in proprietary applications.

- Third‑party AI agents – Tools like Perplexity’s PC Agent, OpenAI’s Operator, or Anthropic’s Computer Use being deployed without review.

- Internal models – Data scientists spinning up their own models on unapproved cloud infrastructure (AWS, GCP, Azure accounts).

The common thread: IT and security teams have no visibility, no control, and no policy.

2. Why Is Shadow AI Happening? – The Employee Perspective

Employees aren’t trying to be malicious. They are trying to be productive. And for many tasks, AI tools are dramatically faster than doing the work manually.

Reasons employees turn to unauthorized AI:

| Reason | Example |
|---|---|
| Speed | Drafting a 10‑page report in 5 minutes instead of 5 hours. |
| Lack of official tools | The company hasn’t provided an approved AI assistant, so they find their own. |
| Ease of use | Signing up for ChatGPT takes 30 seconds with a personal email. |
| Perceived low risk | “It’s just a chatbot – what could go wrong?” |
| Competitive pressure | “Everyone else is using AI; if I don’t, I’ll fall behind.” |

Most employees don’t realize that seemingly innocent queries can expose trade secrets, customer data, or intellectual property. A marketing manager pasting a draft ad campaign into ChatGPT seems harmless – until that draft is retained by the provider and, if training is not disabled, potentially absorbed into a model that competitors also use.

3. Why Shadow AI Is Dangerous – The Risks

The risks of Shadow AI fall into four categories. Each can cause serious financial and reputational damage.

A. Data Leakage (The #1 Risk)

When employees paste sensitive information into a public AI model, that data may be:

- Stored on the provider’s servers.

- Used to train future versions of the model (unless the provider offers opt‑out – and most employees never check).

- Accessible to the provider’s employees or contractors.

Real example: In 2023, Samsung engineers reportedly pasted confidential source code into ChatGPT while debugging it. Samsung subsequently restricted generative AI tools on company devices, but by then the code had already left the company’s control.

B. Compliance Violations

Regulations such as GDPR, HIPAA, and CCPA, along with FINRA rules, restrict how personal, health, and financial data can be processed. Sending patient names, credit card numbers, or EU residents’ personal data to an unapproved AI provider can trigger penalties under each regime. Under GDPR alone, fines can reach €20 million or 4% of global annual turnover, whichever is higher.
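To make the GDPR ceiling concrete, here is a minimal arithmetic sketch in Python. It only computes the statutory upper bound for the higher fine tier (the greater of €20 million or 4% of global annual turnover). The function name is hypothetical, and actual fines are set case by case by regulators and are usually far below the cap.

```python
def gdpr_max_fine_eur(global_annual_turnover_eur: int) -> float:
    """Upper bound of the higher GDPR fine tier, in euros.

    The cap is the greater of a flat EUR 20 million or 4% of worldwide
    annual turnover. This is a ceiling, not a prediction of any real fine.
    """
    flat_cap_eur = 20_000_000
    turnover_cap_eur = global_annual_turnover_eur * 4 / 100
    return max(flat_cap_eur, turnover_cap_eur)
```

For a company with €100 million in turnover, 4% is only €4 million, so the flat €20 million cap applies. At €2 billion in turnover, the 4% figure (€80 million) becomes the ceiling.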

C. Insecure Code Generation

AI coding assistants can generate code that looks correct but contains security vulnerabilities (SQL injection, hardcoded credentials, improper input validation). Developers who accept AI‑generated code without review can ship those vulnerabilities into production systems.
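As an illustration of the SQL injection case, the sketch below contrasts a string-built query, the kind of code that can look fine at a glance, with a parameterized one. It uses Python’s standard `sqlite3` module. The `users` table, the function names, and the demo data are all hypothetical.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with two hypothetical users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany(
        "INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)]
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list:
    # Vulnerable: user input is spliced into the SQL text, so a crafted
    # value can change the meaning of the query.
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list:
    # Safer: the value is bound as a parameter and never parsed as SQL.
    query = "SELECT id, username FROM users WHERE username = ?"
    return conn.execute(query, (username,)).fetchall()
```

Passing the input `' OR '1'='1` to `find_user_unsafe` returns every row in the table, while `find_user_safe` treats the same input as a literal username and returns nothing. Code review and static analysis are the usual places to catch this pattern, whoever (or whatever) wrote the code.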

D. Legal and Contractual Risks

Many vendor contracts prohibit sharing certain types of information with third parties. Employees using ChatGPT to summarize a supplier agreement may violate those terms, triggering breach of contract.

4. The Numbers – How Bad Is It Really?

Recent surveys and industry reports paint a sobering picture:

| Statistic | Source | Implication |
|---|---|---|
| 79% of IT leaders report unauthorized AI use in their organization | Netskope 2026 | Almost every company has Shadow AI. |
| 20% of employees have pasted sensitive data into public AI | Netskope 2026 | One in five is a data leak waiting to happen. |
| 60% of employees use AI for work without informing their employer | Salesforce 2025 | Most usage is hidden. |
| 85% of IT leaders say they are “very” or “extremely” concerned about Shadow AI | Netskope 2026 | It keeps security teams up at night. |
| 0% of organizations have full visibility into all AI use | Vendor estimate | No one has solved this yet. |

5. Case Study – How One Company Lost Trade Secrets to a Public AI

In early 2026, a mid‑sized pharmaceutical company discovered that an employee had pasted the molecular structure of an experimental drug into ChatGPT to “check for similar compounds.” The employee had not disabled training on their account. Two months later, a competitor’s AI‑generated research note referenced the same molecular structure – which had never been published.

The company could not prove the leak originated from ChatGPT, but the timing was hard to ignore. The incident cost an estimated $50 million in lost competitive advantage.

This story is not unique. It is happening across every industry: finance, legal, manufacturing, retail, healthcare, and technology.

6. How to Regain Control – A Practical Guide for IT Leaders

You cannot stop employees from using AI. If you try to block every tool, they will find workarounds (personal devices, VPNs, mobile hotspots). Instead, focus on visibility, policy, and safe alternatives.

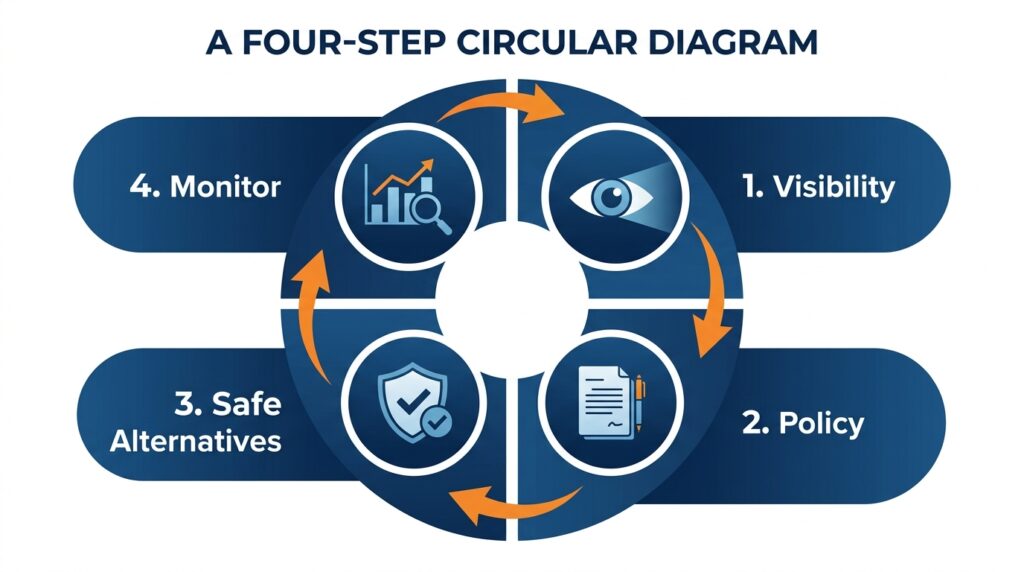

Step 1: Gain Visibility (Detect Shadow AI)

- Use cloud access security brokers (CASBs) – Tools like Netskope, Skyhigh Security (formerly McAfee’s CASB), or Microsoft Defender for Cloud Apps can detect traffic to AI providers.

- Monitor DNS logs – Look for domains such as openai.com, chatgpt.com, anthropic.com, claude.ai, and deepseek.com.

- Deploy browser extensions – Some security tools can alert when employees paste large amounts of text into AI chat interfaces.
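The DNS log check can be sketched in a few lines of Python. This is a minimal illustration, not a production detector. It assumes a hypothetical log format of `timestamp client_ip queried_domain` separated by whitespace, and a short illustrative watchlist that you would replace with your own.

```python
from collections import Counter

# Illustrative watchlist only; extend it with the providers you care about.
AI_DOMAINS = frozenset(
    {"openai.com", "chatgpt.com", "anthropic.com", "claude.ai", "deepseek.com"}
)


def matches_watchlist(domain: str, watchlist=AI_DOMAINS) -> bool:
    """True if the domain is a watched domain or one of its subdomains."""
    domain = domain.lower().rstrip(".")
    return any(domain == d or domain.endswith("." + d) for d in watchlist)


def count_ai_lookups(log_lines) -> Counter:
    """Count lookups of watched AI domains per client IP.

    Assumes each line is 'timestamp client_ip queried_domain' (a
    hypothetical format); lines with any other shape are skipped.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue
        _timestamp, client_ip, domain = parts
        if matches_watchlist(domain):
            hits[client_ip] += 1
    return hits
```

Matching on the domain suffix (rather than a substring) catches subdomains such as `api.openai.com` without flagging unrelated names that merely contain a watched string. DNS data shows that a lookup happened, not what was sent, so treat the counts as a starting point for a conversation rather than proof of a leak.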

Step 2: Create and Communicate an AI Policy

- Define what is allowed. Which AI tools are approved? What data can be shared?

- Prohibit sharing of sensitive data (PII, trade secrets, financial data, source code).

- Require opt‑out of training – Many providers allow users to disable using their data for model improvement.

- Train employees – Most don’t understand the risks. Even a 15‑minute session on what not to paste into an AI tool can reduce risky usage.
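A policy like this is easier to enforce and audit when it is also written down as data. The Python sketch below is one possible shape, under stated assumptions: the class and function names, the tool name `corp-assistant`, and the data labels are all hypothetical, and it presumes some upstream process has already labeled the data being shared.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIPolicy:
    """A hypothetical machine-readable version of an AI usage policy."""
    approved_tools: frozenset
    prohibited_data: frozenset
    require_training_opt_out: bool = True


def check_request(policy, tool, data_labels, training_opted_out):
    """Return a list of policy violations; an empty list means allowed."""
    violations = []
    if tool not in policy.approved_tools:
        violations.append(f"tool not approved: {tool}")
    # Sort so the report order is stable regardless of input order.
    for label in sorted(set(data_labels) & policy.prohibited_data):
        violations.append(f"prohibited data: {label}")
    if policy.require_training_opt_out and not training_opted_out:
        violations.append("training opt-out not enabled")
    return violations
```

For example, a policy built with `AIPolicy(frozenset({"corp-assistant"}), frozenset({"pii", "trade_secret", "financial", "source_code"}))` would allow a marketing draft sent to `corp-assistant` with training disabled, and would report three separate violations for source code sent to an unapproved tool with training enabled. Returning every violation, rather than stopping at the first, gives employees a complete explanation in one pass.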

Step 3: Provide Safe, Approved Alternatives

- Enterprise AI gateways – Tools like Cloudflare’s AI Gateway let IT proxy and inspect AI traffic, while managed platforms like Amazon Bedrock route model access through cloud accounts that IT controls.

- Corporate‑branded AI instances – Some vendors offer isolated, private instances where training is disabled by default.

- Internal models – For highly sensitive data, companies can run open‑source models (Llama 4, Mistral) entirely within their own cloud.
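One thing a gateway or proxy in front of an AI provider might do is redact obvious sensitive values before a prompt leaves the network. The Python sketch below is a deliberately simple illustration, not any vendor’s implementation. It catches only email addresses and card-like numbers that pass the Luhn checksum; the patterns, placeholder strings, and function names are assumptions, and real data loss prevention tooling covers far more cases.

```python
import re

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit counting from the right."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact(prompt: str) -> str:
    """Replace emails and Luhn-valid card numbers with placeholders."""
    def _redact_card(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())
        # Leave digit runs that fail the checksum (order IDs, etc.) alone.
        return "[REDACTED_CARD]" if luhn_valid(digits) else match.group()

    prompt = CARD_RE.sub(_redact_card, prompt)
    return EMAIL_RE.sub("[REDACTED_EMAIL]", prompt)
```

The Luhn check is there to cut false positives: a 16-digit order number that fails the checksum passes through untouched, while a well-formed card number is replaced. Pattern matching like this will miss trade secrets and free-text PII, which is why it complements policy and training rather than replacing them.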

Step 4: Monitor and Respond

- Set up alerts for unusual AI usage (e.g., 10,000+ tokens pasted in one minute).

- Regularly review AI access logs – Who is using which tools, and for what purpose?

- Have an incident response plan for when (not if) a data leak is discovered.
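The volume alert can be sketched as a per-user sliding window. The Python example below is a minimal illustration, assuming you already have a feed of (user, timestamp, token count) events from a gateway or browser extension. The class name and the event feed are hypothetical, and the defaults mirror the 10,000 tokens per minute example above.

```python
from collections import defaultdict, deque


class PasteVolumeMonitor:
    """Flags users whose token volume in a sliding window crosses a threshold.

    Assumes events for a given user arrive in non-decreasing time order.
    """

    def __init__(self, token_threshold: int = 10_000, window_seconds: float = 60):
        self.token_threshold = token_threshold
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # user -> deque of (timestamp, tokens)
        self._totals = defaultdict(int)    # user -> tokens currently in window

    def record(self, user: str, timestamp: float, tokens: int) -> bool:
        """Record one event; return True if the user should trigger an alert."""
        events = self._events[user]
        events.append((timestamp, tokens))
        self._totals[user] += tokens
        # Evict events that have aged out of the window.
        cutoff = timestamp - self.window_seconds
        while events and events[0][0] <= cutoff:
            _, old_tokens = events.popleft()
            self._totals[user] -= old_tokens
        return self._totals[user] >= self.token_threshold
```

Keeping a running total per user means each event is processed in roughly constant time, which matters if the monitor sits inline with traffic. The threshold itself is a judgment call: set it too low and analysts drown in alerts, too high and a slow, steady leak goes unnoticed.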

7. The Future – Why Shadow AI Won’t Disappear

Shadow AI is not a temporary problem. As AI agents become more capable, employees will find even more creative ways to use them without approval. Consider:

- Agentic AI – Employees may spin up autonomous agents that run 24/7, performing tasks that were previously impossible.

- Open‑source models – Anyone can download a small open‑weight model (for example, a Llama or Mistral variant) and run it on a laptop, largely invisible to network‑based monitoring.

- Personal devices – Employees can use AI on their phones and transfer results to work computers.

The solution is not a technical silver bullet. It is a cultural shift. Companies must move from “block everything” to “enable safely.” That means investing in AI governance, training, and tools that give employees the productivity gains they want without the security nightmares.

Frequently Asked Questions (FAQ)

Q1: Is Shadow AI really that common?

A: Yes. Netskope’s 2026 survey found that 79% of IT leaders report unauthorized AI use in their organization. The real number is almost certainly higher because many instances go undetected.

Q2: What’s the difference between Shadow AI and Shadow IT?

A: Shadow IT involved cloud apps (storage, collaboration) that mostly stored data. Shadow AI involves LLMs that ingest and process data – potentially learning from it. The risk of data leakage is much higher.

Q3: Can I just block ChatGPT at the firewall?

A: You can, but employees will find workarounds (personal devices, VPNs, mobile hotspots). Also, many legitimate business functions now require AI. A complete ban is rarely effective.

Q4: How do I know if my employees are using Shadow AI?

A: Use CASB tools, inspect DNS logs, or deploy browser extensions designed to detect AI traffic. Ask employees directly – many will admit it if the conversation is non‑punitive.

Q5: What should I do if I find a data leak?

A: First, stop the leak (disable the employee’s access to that AI tool). Second, assess what data was exposed. Third, notify legal and compliance. Fourth, use the incident as a training opportunity – not a firing offense.

Q6: Are there any AI tools that are safe to use with sensitive data?

A: Some vendors offer “private” or “air‑gapped” instances where data is not used for training and is stored only within your cloud. Amazon Bedrock, Azure OpenAI Service (with data isolation), and Google Vertex AI have enterprise options. Open‑source models (Llama, Mistral) can be run entirely on your own servers.

Q7: How does this connect to your article on AI inference costs?

A: Shadow AI drives up inference costs without IT’s knowledge. Finance teams may see rising cloud bills but have no idea which department is generating them. Visibility into Shadow AI is the first step to cost control.

Q8: What’s the single most important thing I can do tomorrow?

A: Talk to your employees. Announce that you know Shadow AI is happening, that you aren’t going to fire anyone, and that you want to work with them to find safe, approved alternatives. Psychological safety is the foundation of good security.

Conclusion – From Shadow to Light

Shadow AI is not going away. Employees will continue to use AI because it makes them faster, smarter, and more competitive. The question is not whether you can stop it – you cannot. The question is whether you can manage it.

By gaining visibility, creating clear policies, providing safe alternatives, and fostering a culture of trust, you can turn Shadow AI from a hidden threat into a governed asset. The companies that succeed will be those that embrace AI openly – but with eyes wide open to the risks.

References & Further Reading

- Netskope – “Shadow AI Report 2026” (April 2026)

- Salesforce – “Generative AI at Work Survey” (2025)

- Gartner – “How to Govern Shadow AI in the Enterprise” (March 2026)

- The Information – “Samsung Bans ChatGPT After Code Leak” (2025)

- CSO Online – “Why Shadow AI Is the Next Big Security Threat” (January 2026)

- MIT Technology Review – “The Employee AI Revolution No One Is Managing” (February 2026)

If you found this explainer useful, check out our related articles:

👉 Why Google, Microsoft, and Amazon Are Building Their Own AI Chips (6 Reasons)

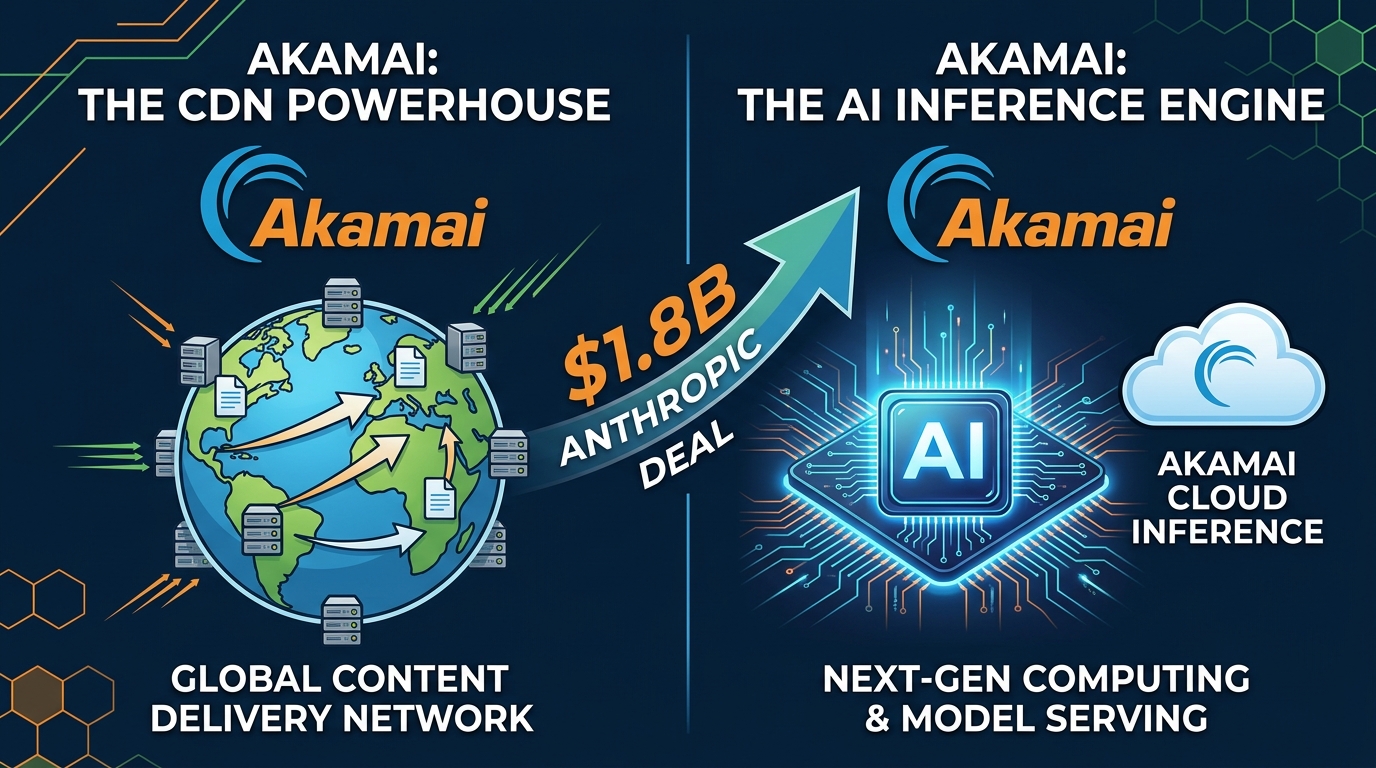

👉 Why Akamai (a CDN Company) Is Winning Billion‑Dollar AI Cloud Deals

👉 Can You Trust Third‑Party AI Models Inside Your iPhone? iOS 27’s Privacy Model, Explained

📬 Subscribe to ExplainThisTech for more “why” breakdowns of the technology shaping our world.

Leave a Reply