Last updated: May 9, 2026 | Reading time: 12 minutes

Introduction – The CDN Giant That Became an AI Cloud Powerhouse

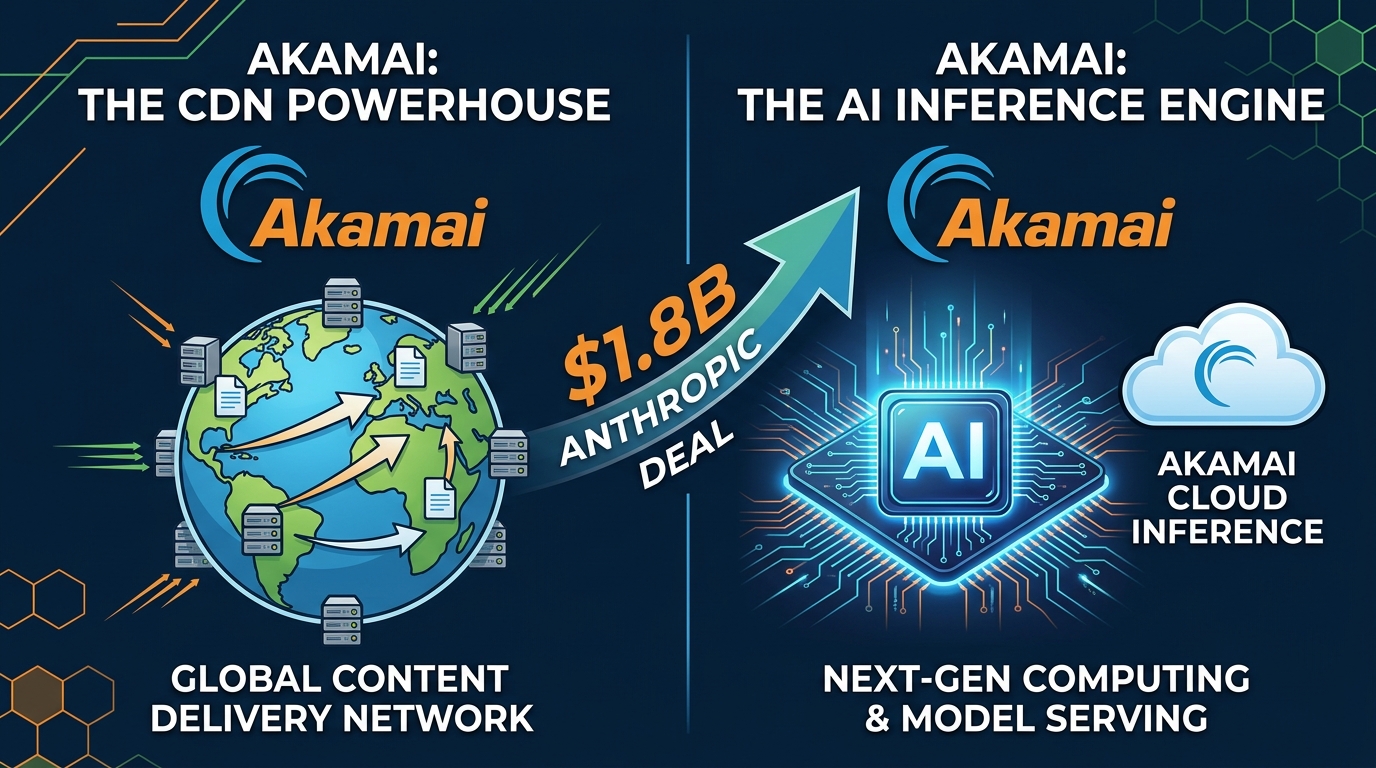

For decades, Akamai Technologies was synonymous with content delivery. The company that helped Netflix stream video and Amazon serve product images was the invisible backbone of the internet. But in 2026, Akamai is no longer just a CDN company.

On May 8, 2026, Anthropic, the $60 billion AI startup behind Claude, signed a seven‑year, $1.8 billion cloud infrastructure deal with Akamai. The contract is the largest in Akamai’s 28‑year history, and it sent the company’s stock up 27% (its biggest one‑day gain in 22 years).

The deal is remarkable for two reasons:

- Anthropic already had access to massive compute – including Amazon’s 5 GW pipeline, SpaceX’s Colossus 1 supercomputer (220,000+ GPUs), and multi‑billion dollar partnerships with Google and Microsoft.

- Akamai beat out “stiff competition” from both hyperscalers (AWS, Azure, GCP) and specialized neoclouds (CoreWeave, Lambda Labs) to win the contract.

How did a legacy CDN provider transform itself into a credible challenger for AI workloads? And what does this mean for the future of cloud computing?

This article explains the strategic pivot that turned Akamai into an AI cloud player, why Anthropic chose it, and what it signals about the shift from centralized training to distributed inference.

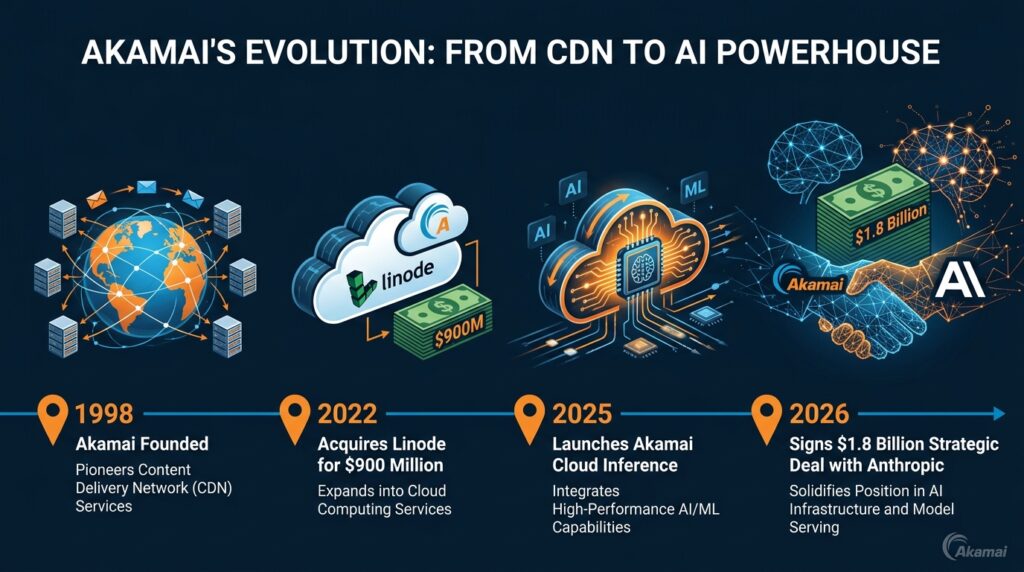

Quick Summary – How Akamai Reinvented Itself

| Year | Milestone | Strategic Significance |
|---|---|---|
| 2022 | Acquires Linode for $900M | Enters cloud compute market, gains data centers and developer tools |
| 2023–2024 | Builds “Akamai Cloud” – unified edge + core cloud platform | Integrates CDN with virtual machines, block storage, Kubernetes |
| March 2025 | Launches Akamai Cloud Inference | Deploys over 4,400 NVIDIA RTX 6000 GPUs across 200+ edge locations |
| May 2026 | Signs $1.8B, 7‑year deal with Anthropic | Largest customer in Akamai history; validates edge‑first AI strategy |

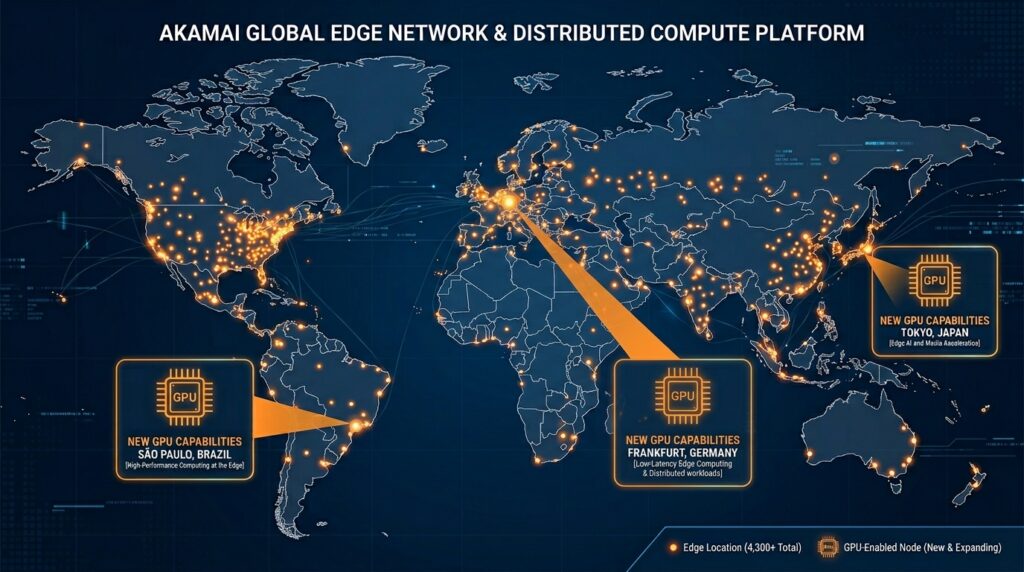

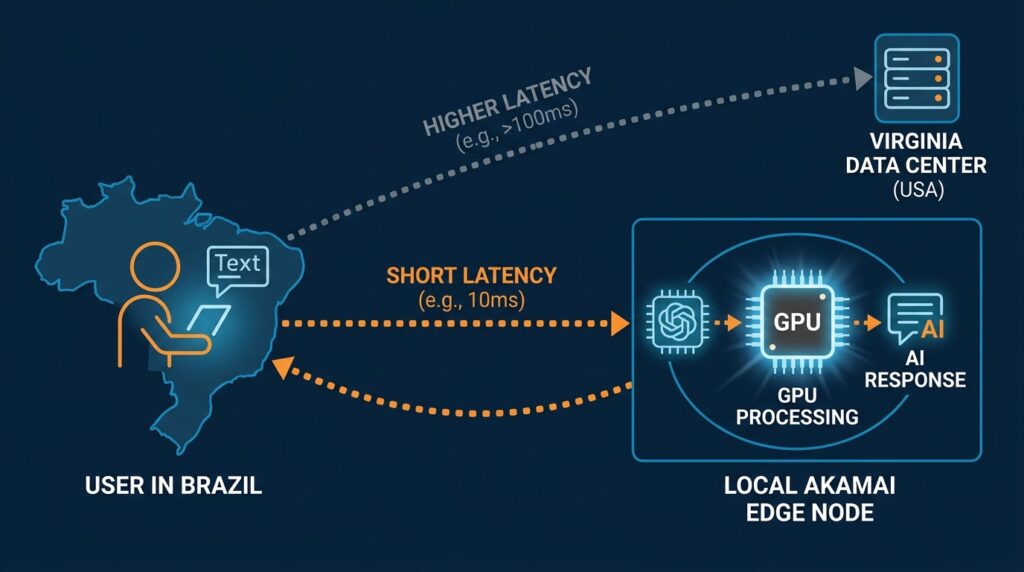

Akamai’s unique selling proposition is distributed inference. Instead of sending every AI request to a centralized hyperscale data center (Virginia, Texas, or Ireland), Akamai runs models on its global edge network – over 4,300 locations in 130+ countries.

For AI workloads that require low latency (real‑time chatbots, voice assistants, autonomous agents) or data residency (compliance, privacy), Akamai’s architecture is not just competitive – it is sometimes superior to AWS or Azure.

1. From CDN to Cloud – The Linode Acquisition

Akamai’s pivot began in 2022 with the $900 million acquisition of Linode, a well‑regarded cloud provider known for simplicity and developer‑friendliness. At the time, the move seemed defensive – Amazon, Microsoft, and Google were eating into CDN margins by bundling delivery with their cloud services.

But Akamai had a vision: merge Linode’s core cloud infrastructure with Akamai’s global edge footprint to create a new kind of distributed cloud.

By 2024, Akamai had integrated:

- Virtual machines and block storage – the basics of any public cloud.

- Kubernetes as a service – for containerized AI workloads.

- Geographically distributed data centers – not just three or four regions, but dozens, piggybacking on Akamai’s existing edge nodes.

The result was Akamai Cloud – a platform that could run general‑purpose compute anywhere, not just in hyperscale “regions.” But the real breakthrough came when Akamai realized that AI inference would be the killer app for edge compute.

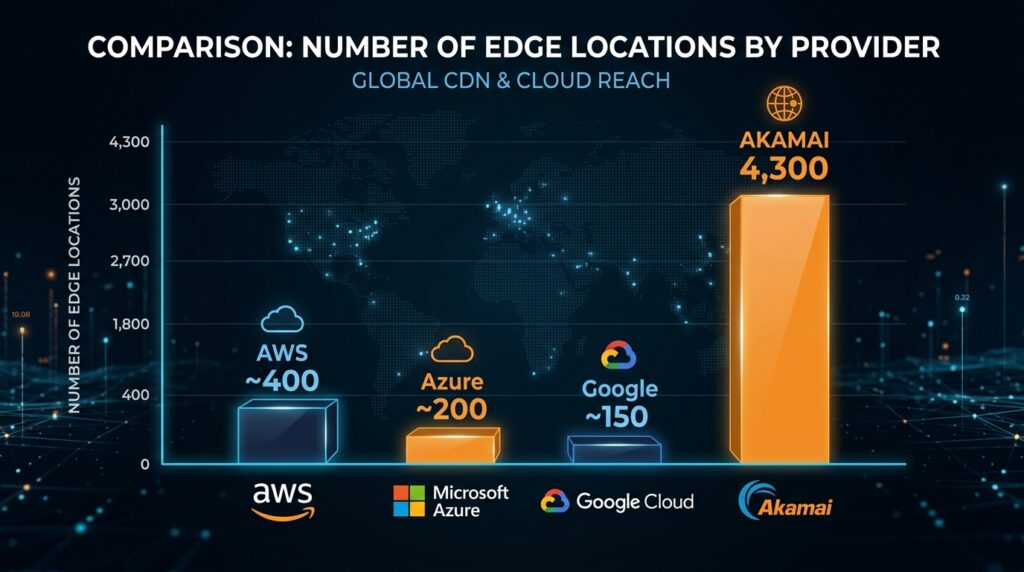

| Cloud Provider | Number of Regions | Edge Locations | Primary Strength |
|---|---|---|---|
| AWS | ~30 | ~400+ (CloudFront) | Breadth of services |
| Azure | ~60 | ~200+ | Enterprise integration |
| Google Cloud | ~40 | ~150+ | AI/ML tooling |
| Akamai Cloud | ~50 (core) | 4,300+ | Lowest latency edge |

2. Akamai Cloud Inference – Built for the AI Era

In March 2025, Akamai launched Akamai Cloud Inference, a managed service specifically designed to run AI models at the edge. Key features:

- GPU footprint – over 4,400 NVIDIA RTX 6000 GPUs deployed across more than 200 edge locations worldwide.

- Low latency – model inference within milliseconds of the end user.

- Cost efficiency – no need to backhaul data to a central region; processing happens locally.

- Data residency compliance – sensitive data can stay within country borders (critical for EU, China, financial services).
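To make the latency and data residency points concrete, here is a minimal sketch of how a router might pick an edge node. It is not Akamai’s actual routing logic: the `EdgeNode` type, the `pick_node` function, the node names, and the coordinates are all hypothetical, chosen only to illustrate the idea of "closest node, optionally restricted to allowed countries."

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt


@dataclass(frozen=True)
class EdgeNode:
    name: str     # hypothetical node label
    country: str  # ISO 3166-1 alpha-2 country code
    lat: float
    lon: float


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    earth_radius_km = 6371.0
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * earth_radius_km * asin(sqrt(a))


def pick_node(nodes, user_lat, user_lon, allowed_countries=None):
    """Return the closest node, optionally restricted to a set of countries."""
    candidates = [
        n for n in nodes
        if allowed_countries is None or n.country in allowed_countries
    ]
    if not candidates:
        raise LookupError("no edge node satisfies the residency constraint")
    return min(candidates, key=lambda n: haversine_km(user_lat, user_lon, n.lat, n.lon))


# Illustrative nodes with approximate city coordinates (assumptions).
NODES = [
    EdgeNode("frankfurt", "DE", 50.11, 8.68),
    EdgeNode("paris", "FR", 48.86, 2.35),
    EdgeNode("london", "GB", 51.51, -0.13),
]

# A user in Dublin: the nearest node overall versus the nearest node
# when data may only be processed in Germany or France.
nearest = pick_node(NODES, 53.35, -6.26)
restricted = pick_node(NODES, 53.35, -6.26, allowed_countries={"DE", "FR"})
print(nearest.name, restricted.name)
```

The trade-off is visible in the two calls: a residency constraint can push a request to a node that is farther away, which is why a dense footprint matters if you want both compliance and low latency.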

Tom Leighton, Akamai’s CEO, described the vision as moving AI from “isolated factories” to a “unified distributed grid” – analogous to how electricity generation shifted from local power plants to interconnected grids.

The early results were promising. By Q1 2026, Akamai’s cloud revenue had grown 40% year‑over‑year to $95 million. But the company needed a marquee customer to prove its edge‑first model at scale. Anthropic became that customer.

3. Why Anthropic Chose Akamai (and Not AWS/Azure)

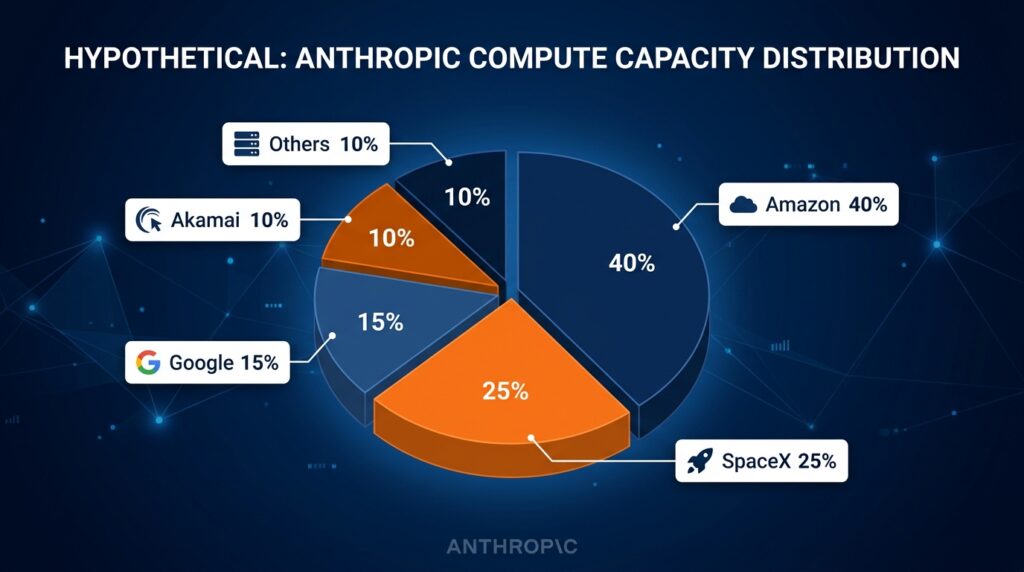

Anthropic already has enormous cloud commitments:

- Amazon: up to 5 GW of capacity as part of a strategic partnership.

- SpaceX: access to the Colossus 1 supercomputer (300 MW, 220,000+ GPUs).

- Google, Microsoft, Broadcom: additional compute partnerships.

So why add Akamai?

A. Inference Latency Is Becoming Critical

Anthropic’s flagship product, Claude, is increasingly used in agentic workflows – AI agents that take actions in real time (browsing, making API calls, controlling software). These agents require sub‑50ms latency, which centralized data centers cannot guarantee for users on other continents.

Akamai’s edge network can place Claude inference within a few milliseconds of almost any user on the planet. For Anthropic, this is not a nice‑to‑have – it is a performance requirement.
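A back-of-the-envelope calculation shows why distance matters so much here. The sketch below assumes light travels through optical fibre at roughly 200 km per millisecond (about two-thirds of its speed in a vacuum) and that the cable follows the great-circle path, which real routes never quite do. The result is therefore a lower bound on network round-trip time, before any routing, queuing, or model compute. The function names and the 100 km "nearby node" figure are illustrative assumptions.

```python
from math import asin, cos, radians, sin, sqrt

FIBER_KM_PER_MS = 200.0  # approximate speed of light in optical fibre


def great_circle_km(lat1, lon1, lat2, lon2):
    """Great-circle (haversine) distance in kilometres."""
    earth_radius_km = 6371.0
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * earth_radius_km * asin(sqrt(a))


def min_round_trip_ms(distance_km):
    """Propagation-only round trip: there and back, with no processing time."""
    return 2 * distance_km / FIBER_KM_PER_MS


# Approximate coordinates for Jakarta and Ashburn, Virginia.
distance = great_circle_km(-6.21, 106.85, 39.04, -77.49)
far_rtt = min_round_trip_ms(distance)

# A node assumed to sit 100 km from the user.
near_rtt = min_round_trip_ms(100)

print(f"{distance:.0f} km away: at least {far_rtt:.0f} ms round trip")
print(f"100 km away: at least {near_rtt:.0f} ms round trip")
```

Under these assumptions, the intercontinental path exceeds a 50 ms budget on physics alone, while a nearby node leaves almost the whole budget for the model itself.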

B. Diversification Against GPU Supply Constraints

As we explained in our article “Why AI & Cloud Infrastructure Demand Is Outpacing Supply”, the entire industry is facing power, chip, and labor bottlenecks. By adding Akamai as another capacity provider, Anthropic hedges against shortages at any single hyperscaler.

If AWS experiences a transformer delay or power outage in Virginia, Anthropic can still route inference requests to Akamai’s edge nodes on the same continent.
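The failover idea can be sketched in a few lines. This is a simplified illustration, not a description of how Anthropic actually routes traffic: the `Backend` type, the backend names, and the latency numbers are hypothetical, and a real system would also weigh capacity, cost, and model availability.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    name: str          # hypothetical provider or region label
    latency_ms: float  # most recently measured latency to this backend
    healthy: bool      # result of the latest health check


def choose_backend(backends):
    """Pick the lowest-latency backend that is currently healthy."""
    healthy = [b for b in backends if b.healthy]
    if not healthy:
        raise RuntimeError("no healthy inference backend available")
    return min(healthy, key=lambda b: b.latency_ms)


backends = [
    Backend("hyperscaler-us-east", latency_ms=18.0, healthy=True),
    Backend("edge-node-nearby", latency_ms=25.0, healthy=True),
]

primary = choose_backend(backends)

# Simulate an outage at the first backend; traffic shifts to the other one.
backends[0].healthy = False
fallback = choose_backend(backends)

print(primary.name, "->", fallback.name)
```

With a single provider, the second call would raise an error instead of returning a fallback, which is the risk that diversification is meant to remove.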

C. Vendor Lock‑in Mitigation

Anthropic’s leadership has publicly stated that it wants to avoid over‑reliance on any single cloud provider. Building on Akamai’s distributed platform gives Anthropic leverage in future negotiations with Amazon, Google, and Microsoft – and ensures it can run Claude even if hyperscaler relationships sour.

4. The “Neocloud” Revolution – Challenging the Hyperscalers

Akamai is not alone. A new generation of “neocloud” providers is emerging, built from the ground up for AI workloads:

| Provider | Specialty | Key Customers | Differentiator |
|---|---|---|---|
| CoreWeave | GPU‑optimized cloud | Microsoft, OpenAI, Stability AI | Massive H100 clusters |
| Crusoe Energy | Stranded power + GPU compute | Microsoft (900 MW Texas facility) | Low‑cost, environmentally efficient |
| Lambda Labs | On‑demand GPU rentals | Research labs, startups | Flexible pricing |
| SpaceX (Colossus) | Dedicated AI supercomputer | Anthropic (exclusive) | 220,000+ GPUs in one location |
| Akamai Cloud Inference | Edge‑native inference | Anthropic | Lowest latency, 4,300 edge locations |

These neoclouds are winning business by offering:

- Better price/performance than hyperscalers (no legacy enterprise overhead).

- Faster access to new GPUs (NVIDIA reportedly prioritizes them because they build clusters faster).

- Specialized architectures (edge inference, liquid cooling, direct‑to‑chip integration).

Anthropic’s $1.8 billion bet on Akamai is the most visible validation yet that the hyperscaler monopoly on AI cloud is breaking down.

5. What This Means for the Future of AI Cloud

The Anthropic‑Akamai deal is not a one‑off. It signals three lasting shifts:

A. AI Inference Will Be Distributed, Not Centralized

Training will remain concentrated in megafactories (Texas, Iowa, Virginia). But inference – the majority of AI compute by 2026 – will run at the edge, near users. Akamai’s 4,300 locations will become mini AI factories.

B. Enterprises Will Have Real Choice

For years, cloud buyers had only three options: AWS, Azure, or GCP. Now, they have dozens. Neoclouds offer lower costs, better latency, and more specialized services. The era of vendor lock‑in is ending for AI workloads.

C. CDN Companies Are Reinventing Themselves

Cloudflare, Fastly, and others are watching Akamai closely. Cloudflare has already announced its own AI inference platform, Workers AI, with models running at the edge. Expect an arms race in edge AI compute over the next 12–24 months.

Frequently Asked Questions (FAQ)

Q1: Is Akamai now a direct competitor to AWS?

Not entirely. For general‑purpose compute (running a database, hosting a website), hyperscalers remain far larger. But for AI inference, Akamai is a credible challenger – and for latency‑sensitive workloads, it may be superior.

Q2: How much will Akamai’s cloud revenue grow after this deal?

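The $1.8 billion contract is spread over seven years, or roughly $257 million per year on average. In Q1 2026, Akamai’s cloud revenue was $95 million for the quarter, an annualized run rate of about $380 million. If the contract revenue is recognized evenly, this deal alone would add about two‑thirds to that run rate.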
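Here is a quick check of that arithmetic. It treats the $95 million as a quarterly figure, as the article states it, and assumes the contract is recognized evenly across the seven years. That is a simplification, since large infrastructure deals often ramp up over time. The variable names are ours.

```python
CONTRACT_USD_M = 1800             # $1.8 billion, expressed in millions
TERM_YEARS = 7
Q1_2026_CLOUD_REVENUE_USD_M = 95  # quarterly cloud revenue quoted in the article

# Assumption: revenue is spread evenly over the contract term.
per_year = CONTRACT_USD_M / TERM_YEARS
per_quarter = per_year / 4

# Annualize the quarterly figure to get a comparable run rate.
annualized_run_rate = Q1_2026_CLOUD_REVENUE_USD_M * 4
uplift = per_year / annualized_run_rate

print(f"Average per year:    ${per_year:.0f}M")
print(f"Average per quarter: ${per_quarter:.0f}M")
print(f"Annualized run rate: ${annualized_run_rate}M")
print(f"Uplift on run rate:  {uplift:.0%}")
```

The comparison only works when both numbers cover the same period: an annual contract value set against a quarterly revenue figure overstates the impact by a factor of four.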

Q3: What about Cloudflare – are they doing the same thing?

Yes. Cloudflare’s Workers AI also runs models on its global network. But Akamai has a much larger edge footprint (4,300 locations vs. Cloudflare’s ~300). Akamai’s head start in dedicated GPU deployment (4,400+ GPUs) is also significant.

Q4: Does this mean AI inference costs will drop?

Potentially. Edge inference can be cheaper because it avoids long‑distance data transfers and uses smaller, more efficient models. However, demand is growing so fast that prices may not fall dramatically in the near term.

Q5: Why didn’t Anthropic just use AWS or Azure for inference?

They do – Anthropic uses multiple providers. But hyperscalers’ inference nodes are still centralized in a handful of regions. For users in, say, Southeast Asia or South America, latency to a Virginia data center is too high. Akamai has edge nodes in Jakarta, Manila, São Paulo, and many other cities.

Q6: What types of AI models run best at the edge?

Small‑to‑medium models optimized for low latency: voice recognition, image classification, translation, summarization, and agentic decision‑making. Very large foundation models (GPT‑5, Gemini Ultra) may still require centralized data centers for full performance.
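A rough way to reason about what fits at the edge is to estimate the memory needed for model weights alone. The sketch below is illustrative: the 48 GB card size, the 80% headroom factor, and the example model sizes are assumptions for the sake of the calculation, not figures from Akamai or any model vendor. Real deployments also need memory for the KV cache and activations, which is what the headroom is meant to stand in for.

```python
def weight_memory_gb(params_billions, bytes_per_param):
    """Memory for weights only: billions of parameters times bytes each, in GB."""
    return params_billions * bytes_per_param


def fits_on_gpu(params_billions, bytes_per_param, gpu_memory_gb, headroom=0.8):
    """True if the weights fit within a fraction of GPU memory.

    The remaining fraction is left for the KV cache and activations.
    """
    return weight_memory_gb(params_billions, bytes_per_param) <= gpu_memory_gb * headroom


GPU_MEMORY_GB = 48  # assumed single-card memory, for illustration only

results = {}
for params, label in [(8, "8B"), (70, "70B")]:
    for bytes_per_param, precision in [(2, "16-bit"), (0.5, "4-bit")]:
        fits = fits_on_gpu(params, bytes_per_param, GPU_MEMORY_GB)
        results[(label, precision)] = fits
        needed = weight_memory_gb(params, bytes_per_param)
        print(f"{label} at {precision}: {needed:.0f} GB of weights, fits on one card: {fits}")
```

Under these assumptions, a small model fits comfortably on a single card, while a 70‑billion‑parameter model fits only after aggressive quantization. That is one reason the largest models tend to stay in centralized, multi‑GPU clusters.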

Q7: Will Akamai sell this capacity to other AI labs?

Almost certainly. Akamai has built a platform, not a private tunnel for Anthropic. Other AI startups and enterprises can already sign up for Akamai Cloud Inference.

Q8: How does this connect to ExplainThisTech’s earlier articles?

Directly. The AI infrastructure constraints we covered (power, chips, labor) are why Anthropic needs multiple suppliers. The inference cost explosion we described is why edge inference matters. And the Texas data center boom shows where training happens – but inference is increasingly global.

Conclusion – The CDN Giant That Became an AI Cloud Powerhouse

Akamai’s transformation from a legacy CDN company to a billion‑dollar AI cloud provider is one of the most remarkable pivots in tech history. By acquiring Linode, building a distributed cloud, and betting on edge inference, Akamai positioned itself perfectly for the moment when AI workloads shifted from training to serving.

The $1.8 billion Anthropic deal is validation – not just of Akamai, but of the entire neocloud movement. It proves that hyperscalers (AWS, Azure, GCP) are not the only game in town. For latency‑sensitive, inference‑heavy workloads, a distributed edge cloud can beat the giants at their own game.

As Tom Leighton said, the future of AI is not a few isolated factories – it is a unified distributed grid spanning the globe. Akamai has built that grid. And Anthropic just bought a front‑row seat.


