Last updated: May 15, 2026 | Reading time: 12 minutes

Introduction – The Invisible Weight of a Single Prompt

Every time you ask ChatGPT a question, generate a Midjourney image, or let Perplexity’s agent browse for you, something physical happens in the real world. A chip draws power. A data center’s cooling system spins faster. A transformer hums at a slightly higher load. A water pipe delivers gallons to a liquid‑cooling loop.

We think of AI as ethereal — a “cloud” of intelligence that lives in servers somewhere distant. But the cloud is made of steel, copper, concrete, and water. And the infrastructure it runs on is straining under the weight of our collective curiosity.

Gartner and Bloomberg figures suggest global AI data‑center spending will exceed $750 billion in 2026. Power‑hungry AI systems are pushing electricity grids toward their limits, consuming water that could sustain cities, and creating supply chain crises for equipment as basic as electrical transformers.

This article exposes the physical reality of AI — why your digital interactions have very real analog consequences, and why the most pressing constraint on AI progress is no longer silicon, but the planet’s capacity to power and cool it.

Quick Summary – The Physical Toll of AI

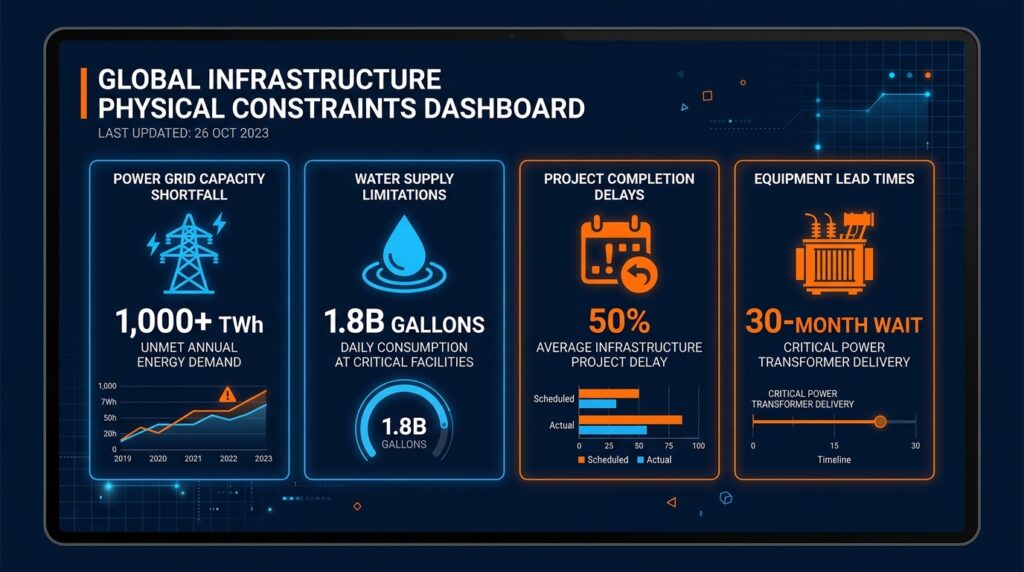

| Metric | Scale | Interpretation |
|---|---|---|
| Global data‑center electricity (2026) | ~1,000 terawatt‑hours (TWh) | Equivalent to Japan’s entire annual consumption |
| AI‑dedicated electricity (2030) | ~465 TWh | Triple 2025 levels; about half of all data‑center power |
| US data‑center share of grid | Over 4% by 2026; 12% by end of decade (McKinsey) | By 2030, more than one in every ten watts could go to data centers |
| Single large data center water use | Up to 1.8 billion gallons annually | Roughly equal to the drinking water needs of a mid‑sized city |
| US projects facing power delays | Nearly half of planned 2026 capacity | 12 GW expected, but only one‑third actively under construction |
| Transformer lead times | From 12 months (2020) to 24–30 months (2026) | A key source of infrastructure gridlock |

1. The Power Problem: AI Is an Electricity Juggernaut

The primary reason your ChatGPT session costs more than a Google search is simple physics: generating language token by token consumes far more electricity than retrieving results from a prebuilt search index.

The International Energy Agency (IEA) quantifies the scale. In 2025 alone, electricity consumption from AI‑focused data centers surged 50% year‑over‑year. Global data‑center electricity demand grew 17% in 2025 — well above overall global electricity demand growth of just 3%. Goldman Sachs Research forecasts that global power demand from data centers will increase 50% by 2027 and as much as 165% by the end of the decade.
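To put the Goldman Sachs percentages on an annual footing, a cumulative increase can be converted into an implied compound annual growth rate. The sketch below is illustrative only: the article does not state the forecast's base year, so the 4‑year and 7‑year horizons are assumptions, and `implied_annual_growth` is a hypothetical helper name.

```python
def implied_annual_growth(total_increase: float, years: float) -> float:
    """Return the compound annual growth rate that produces `total_increase`
    (for example 0.50 for +50%) over `years` years."""
    if years <= 0:
        raise ValueError("years must be positive")
    return (1.0 + total_increase) ** (1.0 / years) - 1.0


# Assumed horizons (not stated in the article): 4 years to 2027, 7 years to 2030.
rate_to_2027 = implied_annual_growth(0.50, years=4)
rate_to_2030 = implied_annual_growth(1.65, years=7)

print(f"+50% over 4 years  -> {rate_to_2027:.1%} per year")
print(f"+165% over 7 years -> {rate_to_2030:.1%} per year")
```

Changing the assumed horizons shifts the annual rates noticeably, which is why the base year matters when comparing forecasts.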

How We Got Here

| Year | Event | Power Implication |
|---|---|---|
| 2022 | ChatGPT launches | A new class of energy‑intensive inference workloads begins |
| 2023–2024 | Search engines add AI overviews | Millions of extra queries daily |
| 2025 | AI agents (Perplexity, Operator, Claude) go mainstream | Autonomous, multi‑step operations multiply power usage |
| 2026 | A single large‑scale training run (GPT‑5 class) | Estimated 40–50 MW for months at a time |
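The 40–50 MW figure in the last row is a power draw, not an amount of energy. A rough sketch of the conversion follows. The 90‑day duration is an assumption (the table only says "months at a time"), and `run_energy_gwh` is a hypothetical helper.

```python
HOURS_PER_DAY = 24


def run_energy_gwh(power_mw: float, days: float) -> float:
    """Energy in gigawatt-hours for a constant draw of `power_mw` over `days`."""
    megawatt_hours = power_mw * days * HOURS_PER_DAY
    return megawatt_hours / 1000.0  # MWh -> GWh


ASSUMED_DAYS = 90  # assumption: the article does not give a duration

low_gwh = run_energy_gwh(40, ASSUMED_DAYS)
high_gwh = run_energy_gwh(50, ASSUMED_DAYS)
print(f"{low_gwh:.1f} to {high_gwh:.1f} GWh over {ASSUMED_DAYS} days")
```

The estimate assumes a constant draw for the whole run. Real runs fluctuate, so treat the result as an order‑of‑magnitude figure.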

The Stanford AI Index 2026 found that AI data centers’ total power capacity has climbed to 29.6 gigawatts, roughly equivalent to New York State’s peak electricity demand. All AI systems combined now consume about as much electricity as an entire country the size of Switzerland or Austria.

2. The Grid Can’t Keep Up – Transformer Shortages and Multi‑Year Delays

Even if enough electricity existed on paper, the physical grid cannot deliver it fast enough. The bottleneck today is not generation — it is power delivery hardware.

Bloomberg reports that US AI data‑center expansion faces severe shortages of transformers, switchgear, and batteries. Approximately 12 GW of data‑center capacity was projected for 2026, but only one‑third is currently under construction. Nearly half of planned US data‑center projects may be delayed or cancelled.

Why the shortage?

- Lead times for high‑power transformers have exploded from 12 months (2020) to 24–30 months in 2026.

- The US has become dependent on transformer imports from China, which surged from fewer than 1,500 units in 2022 to over 8,000 in 2025, an uneasy position amid technology decoupling.

- Hyperscale campuses face multi‑year setbacks due to slow permitting, lengthy grid interconnection queues, and labor bottlenecks.

A further challenge is the mismatch between project timelines. Data centers can be built in 18–24 months, but the power infrastructure they depend on (transmission lines, substations, transformers) can take as long as 10–12 years. This mismatch makes systematic planning nearly impossible.
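The timeline mismatch can be made concrete with the figures already quoted: an 18–24 month build and a 24–30 month transformer lead time. The sketch below assumes, purely for illustration, that the transformer is ordered on the day construction starts. `idle_months` is a hypothetical helper.

```python
def idle_months(build_months: int, hardware_lead_months: int) -> int:
    """Months a finished building waits for hardware ordered at groundbreaking.

    Returns 0 when the hardware arrives before or as the building is completed.
    """
    return max(0, hardware_lead_months - build_months)


# Best case: slowest build (24 months) paired with the shortest lead time (24 months).
best_case = idle_months(build_months=24, hardware_lead_months=24)
# Worst case: fastest build (18 months) paired with the longest lead time (30 months).
worst_case = idle_months(build_months=18, hardware_lead_months=30)

print(f"Idle time waiting for a transformer: {best_case} to {worst_case} months")
```

Ordering hardware before groundbreaking shortens the gap, which is one reason developers try to lock in supply early.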

3. Water – AI’s Thirsty Secret

Data centers do not just consume electricity; they consume water — often enormous quantities of it — to cool the servers they run.

A single 300‑megawatt data center can consume nearly 1 billion gallons of water annually. For a large evaporatively‑cooled facility, water consumption can reach 1.8 billion gallons.

How does that compare? It is roughly the annual water consumption of a mid‑sized city of 600,000 to 1 million people.
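One way to sanity‑check the city comparison is to work out the per‑person daily volume it implies. This minimal sketch uses only the figures above (1.8 billion gallons, 600,000 to 1 million people). The function name is hypothetical.

```python
DAYS_PER_YEAR = 365


def gallons_per_person_per_day(annual_gallons: float, population: int) -> float:
    """Daily volume per resident if `annual_gallons` were shared across `population`."""
    return annual_gallons / population / DAYS_PER_YEAR


FACILITY_GALLONS_PER_YEAR = 1.8e9  # large evaporatively cooled facility, per the text

implied_high = gallons_per_person_per_day(FACILITY_GALLONS_PER_YEAR, 600_000)
implied_low = gallons_per_person_per_day(FACILITY_GALLONS_PER_YEAR, 1_000_000)

print(f"Implied use: {implied_low:.1f} to {implied_high:.1f} gallons per person per day")
```

Readers can set the result against per‑capita figures from their own water utility to judge how the comparison holds up locally.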

Stanford AI Index 2026 Findings

The same Stanford report that documented power consumption also quantified water use:

- Annual GPT‑4o inference water use alone may exceed the drinking water needs of 1.2 million people.

- Projections suggest AI’s global water footprint could reach 4.2–6.6 billion cubic meters annually by 2027.
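The projection above is in cubic meters, while the facility figures earlier in this section are in gallons. A small conversion sketch puts them in the same unit. The factor of about 264.172 US gallons per cubic meter is a standard conversion. The constant and function names are illustrative.

```python
US_GALLONS_PER_CUBIC_METER = 264.172  # standard conversion factor (rounded)


def cubic_meters_to_gallons(cubic_meters: float) -> float:
    """Convert a volume in cubic meters to US gallons."""
    return cubic_meters * US_GALLONS_PER_CUBIC_METER


low_gallons = cubic_meters_to_gallons(4.2e9)
high_gallons = cubic_meters_to_gallons(6.6e9)

print(f"{low_gallons / 1e12:.2f} to {high_gallons / 1e12:.2f} trillion US gallons per year")
```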

The Cooling Conundrum

Why does AI use so much water? Rack densities have jumped from 10–20 kilowatts (traditional air‑cooled servers) to hundreds of kilowatts or even megawatts in AI factories. This forces the adoption of liquid cooling, which in many configurations requires significant water — either for direct cooling or for heat rejection.
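The link between rack power and coolant can be sketched with the basic heat‑transfer relation: flow = heat load / (specific heat × temperature rise). The example below is a simplification under stated assumptions: a 10 K coolant temperature rise, all rack power leaving as heat into the loop, and 200 kW standing in for "hundreds of kilowatts". It describes water circulating in a loop, not water consumed.

```python
WATER_SPECIFIC_HEAT = 4186.0  # J/(kg*K), approximate value for liquid water


def coolant_flow_kg_per_s(heat_load_watts: float, delta_t_kelvin: float) -> float:
    """Mass flow of water needed to carry away `heat_load_watts`
    while warming by `delta_t_kelvin` (steady state, no losses)."""
    if delta_t_kelvin <= 0:
        raise ValueError("delta_t_kelvin must be positive")
    return heat_load_watts / (WATER_SPECIFIC_HEAT * delta_t_kelvin)


ASSUMED_DELTA_T = 10.0  # assumption: coolant warms by 10 K across the rack

# Rack loads in watts: a 20 kW traditional rack, an assumed 200 kW AI rack, a 1 MW rack.
flows = {load: coolant_flow_kg_per_s(load, ASSUMED_DELTA_T)
         for load in (20_000, 200_000, 1_000_000)}

for load, flow in flows.items():
    # One kilogram of water is roughly one liter.
    print(f"{load / 1000:>6.0f} kW rack -> about {flow:.2f} kg/s of water")
```

Flow scales linearly with rack power, so a tenfold jump in density needs a tenfold increase in coolant flow or a larger temperature rise.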

However, efficiency efforts are emerging. Johnson Controls launched zero‑water‑consumption reference designs for gigawatt‑scale AI data centers in early 2026, and zero‑water evaporative designs are gaining traction. But at the moment, the most advanced AI data centers are still net water consumers, often located in drought‑prone areas, creating tension with local communities.

4. HBM and Memory Supply – A Different Kind of Constraint

Power, transformers, and water are not the only bottlenecks. The chips inside the servers are constrained too — particularly high‑bandwidth memory (HBM), the specialized memory essential for AI accelerators.

SemiAnalysis estimates that by 2026, memory could account for roughly 30% of hyperscaler AI spending, up from only about 8% in 2023 and 2024, as HBM shortages ripple through the supply chain. Orders for Nvidia GPUs have grown to $1 trillion through 2027, double the figure from a year ago, with lead times stretching to nearly a year.

Why is memory suddenly a bottleneck? AI models expanded from hundreds of billions to trillions of parameters. Each parameter must be stored in memory during training and inference, so HBM demand grows at least in proportion to model size. Suppliers such as SK Hynix, Samsung, and Micron cannot scale production fast enough to meet demand, and building new fabrication capacity takes years.
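A back‑of‑the‑envelope sketch shows how parameter counts translate into memory. It counts model weights only and ignores activations, caches, and optimizer state, so real requirements are higher. The 2 bytes per parameter (16‑bit weights) and 80 GB of HBM per accelerator are assumptions for illustration, and the function names are hypothetical.

```python
import math


def weight_memory_gb(num_parameters: float, bytes_per_parameter: int) -> float:
    """Memory needed to hold the model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


def accelerators_needed(memory_gb: float, hbm_per_accelerator_gb: float) -> int:
    """Minimum number of accelerators whose combined HBM can hold `memory_gb`."""
    return math.ceil(memory_gb / hbm_per_accelerator_gb)


ASSUMED_BYTES_PER_PARAM = 2  # assumption: 16-bit weights
ASSUMED_HBM_GB = 80          # assumption: HBM capacity per accelerator

for params in (200e9, 1e12):
    memory = weight_memory_gb(params, ASSUMED_BYTES_PER_PARAM)
    count = accelerators_needed(memory, ASSUMED_HBM_GB)
    print(f"{params:.0e} parameters -> {memory:,.0f} GB of weights, at least {count} accelerators")
```

Under these assumptions, a fivefold increase in parameters means a fivefold increase in the HBM needed just to hold the weights.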

5. The Physical Supercycle – From Silicon to Steel

Collectively, these constraints represent a fundamental shift in the nature of the AI industry. The bottleneck has moved down the stack: the primary constraints are no longer just the availability of advanced chips, but the physical capacity of the electrical grid, the supply of specialized cooling systems, and the raw materials needed to build the massive “AI factories” of the future.

The $1 Trillion Physical Supercycle

Investors and analysts have dubbed this phenomenon the “$1 trillion AI physical supercycle.” Capital that once flowed exclusively into chip design is now being redirected into:

- Grid‑scale power infrastructure (transformers, substations, transmission lines)

- Water treatment and recycling plants

- Advanced liquid‑cooling systems

- Specialized construction labor in remote locations

- Off‑grid power generation (natural gas, solar, small modular reactors)

By 2028, off‑grid generation for data centers could account for a substantial uptick in natural gas usage not captured in official power sector statistics. The US Federal Energy Regulatory Commission (FERC) has indicated it will advance rule changes by mid‑2026 to address how large loads connect to the grid, signaling that power constraints are moving from an opaque industry issue to a public infrastructure policy debate.

AI in Energy Systems: A Double‑Edged Sword

There is a twist: AI might also help solve the very problems it creates. Deloitte estimates that applying AI to energy systems could generate substantial energy savings, potentially equivalent to the annual usage of a country of 290 million people like Indonesia. The company’s AI for Energy Systems report suggests AI has the potential to deliver 3,700 terawatt‑hours of energy savings globally.

But these efficiency gains are not guaranteed and will not materialize overnight. Meanwhile, the infrastructure deficit is immediate.

Frequently Asked Questions (FAQ)

Q1: How much electricity does a single ChatGPT query use?

A: Estimates vary, but a single ChatGPT query uses roughly 10–20 times more electricity than a standard Google search, depending on model size and complexity. Multiply that by billions of queries per day, and the power demand becomes enormous.
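To see how the multiplier adds up, here is a rough sketch. The inputs are assumptions rather than figures from this article: 0.3 watt‑hours for a standard search (an often‑quoted estimate) and 1 billion queries per day. Only the 10–20x multiplier comes from the answer above.

```python
ASSUMED_SEARCH_WH = 0.3         # assumption: energy of one standard web search, in Wh
ASSUMED_QUERIES_PER_DAY = 1e9   # assumption: one billion AI queries per day


def daily_energy_gwh(wh_per_query: float, queries_per_day: float) -> float:
    """Total daily energy in gigawatt-hours for a given per-query cost."""
    return wh_per_query * queries_per_day / 1e9  # Wh -> GWh


low_daily = daily_energy_gwh(ASSUMED_SEARCH_WH * 10, ASSUMED_QUERIES_PER_DAY)
high_daily = daily_energy_gwh(ASSUMED_SEARCH_WH * 20, ASSUMED_QUERIES_PER_DAY)

print(f"Roughly {low_daily:.0f} to {high_daily:.0f} GWh per day under these assumptions")
```

Because per‑query estimates vary widely, the value of the sketch is in showing how the total scales with query volume, not in the specific numbers.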

Q2: Why can’t we just build more power plants?

A: Building generation capacity is only part of the answer. The real bottleneck is grid infrastructure — transformers, switchgear, transmission lines, and the skilled labor to install them. These take 3–8 years to bring online, far slower than AI model development.

Q3: Which uses more water: training or inference?

A: Inference is the bigger long‑term consumer because it runs continuously. A model like GPT‑4o is used by hundreds of millions of people, and every query adds heat that the cooling system must remove. Training is a massive one‑time spike; inference is a steady, persistent river.

Q4: Is there a solution to these infrastructure constraints?

A: In the longer term, yes. Efficiency improvements (better chip designs, more efficient cooling, compact transformers) and new capacity (more fabs, more grid investment) will ease constraints. But the lag between design and deployment is measured in years, not months.

Q5: How does this affect the cost of using AI services?

A: As power, infrastructure, and water costs rise, AI providers will face pressure to raise prices. Some may shift to smaller, more efficient models for routine tasks while reserving large models for premium use cases.

Q6: How does this connect to your article “Why AI & Cloud Infrastructure Demand Is Outpacing Supply”?

A: That article laid out the five constraints — power, chips, construction, labor, water. This article shows how those constraints are playing out in real time, with concrete numbers (1,000 TWh, 12 GW, 30% memory share, 1.8 billion gallons). Consider this a data‑driven sequel.

Q7: What happens if we run out of transformer capacity?

A: Without enough transformers, new data centers cannot connect to the grid, no matter how many GPUs exist. Some projects are exploring direct off‑grid generation (natural gas, solar with battery storage) to bypass the public grid entirely.

Q8: Can I, as an individual user, reduce my AI energy footprint?

A: Yes. Use smaller models when possible (e.g., Llama 3 8B instead of GPT‑4o for simple tasks). Avoid unnecessarily long prompts. Batch your queries. Turn off agentic features when you don’t need them — each autonomous agent action adds incremental load.

Conclusion – The Cloud Has a Weight

The term “cloud” is a deceptive metaphor. AI lives in physical data centers — buildings full of chips, copper, steel, and water — connected to power grids that are creaking under the strain of our collective curiosity.

The digital is real. Your ChatGPT queries consume electricity. Your Midjourney generations require water. Your Perplexity agent’s browsing depends on a transformer that took 30 months to arrive.

This is not a reason to stop using AI. It is a reason to understand the physical infrastructure that supports it — and to demand that companies and policymakers invest in the grid, the supply chain, and the water systems that will carry us into the AI era.

Because the cloud is not weightless. And pretending otherwise is the most dangerous illusion of all.

References & Further Reading

- BloombergNEF – “AI, Grids, and Electric Vehicles: Dispatch from the BNEF Summit San Francisco 2026”

- IFA Magazine – “AI’s power problem: the climate risk and opportunity investors can’t ignore”

- Goldman Sachs – Global data center power demand forecast

- International Energy Agency – “Key Questions on Energy and AI” (2026)

- Bloomberg / Digital Watch – “Power hardware shortages are delaying AI data centre expansion”

- Data Center Knowledge – “After the Power Crunch, AI Infrastructure Hits a GPU Wall”

- J.P. Morgan – “Is AI running out of compute?”

- Stanford HAI – 2026 AI Index Report

- Deloitte – “Gen AI to double global data centres’ electricity consumption by 2030”

- European Business Magazine – “Data Centre Power Crisis Is Choking the AI Revolution”

- ScienceDirect – “The water footprint of artificial intelligence”

