For two years, the AI industry’s biggest headache was simple: not enough GPUs. Nvidia chips were backordered for months. Startups waited half a year for compute capacity. Hyperscalers scrambled to secure every available H100 and B200.

But in 2026, the bottleneck has shifted. The crisis now isn’t silicon — it’s memory. Specifically, High-Bandwidth Memory (HBM). This specialized, ultra-fast memory has quietly become the hardest component to source in the entire AI supply chain. Every major supplier — SK Hynix, Micron, Samsung — has sold out its entire 2026 HBM capacity months in advance.

That is why SK Hynix, a company worth less than $100 billion just 16 months ago, is now on the cusp of a $1 trillion market valuation. It is why a new memory-focused ETF surged 90% in its first month. And it is why analysts now call memory chips AI’s “biggest bottleneck,” a shift that is reshaping the economics of artificial intelligence.

This article explains why HBM is the new GPU. You will learn what HBM is, why AI cannot function without it, how a supply-demand gap of 50–60% emerged, and why the company that controls HBM now holds the keys to the entire AI industry.

Quick Summary – What You Need to Know

| Question | Answer |
|---|---|
| What is HBM? | High-Bandwidth Memory: a vertically stacked, ultra-fast memory chip that sits directly next to GPUs to feed them data at enormous speeds. |
| Why is it critical for AI? | AI models require massive bandwidth to move parameters between memory and compute. Without HBM, the fastest GPU is useless: it sits idle waiting for data. |
| What is the supply gap? | HBM supply-demand imbalance is estimated at 50–60% in 2026. Every major supplier is sold out through the entire year. |
| Who dominates HBM? | SK Hynix leads with approximately 54% of the global HBM4 market and over two‑thirds of Nvidia’s HBM orders for its Vera Rubin platform. |
| How has SK Hynix performed? | Shares rose 274% in 2025 and another 200%+ in 2026, pushing the company from under $100 billion to nearly $1 trillion in 16 months. |
| Why can’t supply expand quickly? | HBM production consumes 3–4x more wafer capacity than standard memory and requires complex 3D stacking. New fabs take 2–3 years to come online. |

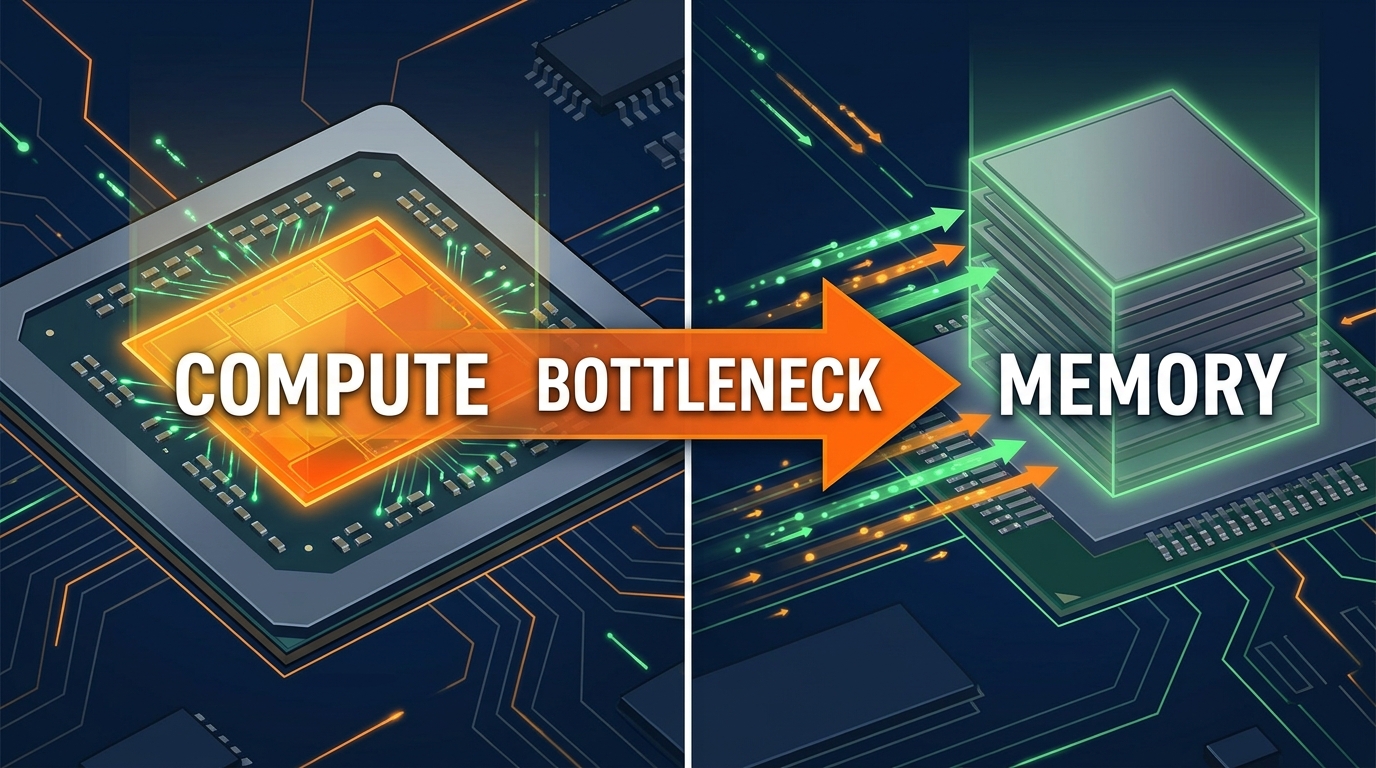

1. The Bottleneck Has Shifted: From Compute to Memory

For much of 2024 and 2025, the AI industry’s defining constraint was power. Hyperscalers had GPUs they could not deploy because electricity grids could not supply enough capacity. Microsoft CEO Satya Nadella famously described “having a bunch of chips sitting in inventory that I can’t plug in.”

That problem has not disappeared. Power remains a long-term constraint, with grid interconnection timelines stretching 3–8 years. But in 2026, a more immediate bottleneck has emerged further up the supply chain: memory.

The Center for a New American Security (CNAS) captured the shift in a May 2026 report: “The world’s leading AI companies cannot get enough chips.” The shortfall is not only in GPUs but in the memory and packaging that make them work. CNAS described AI chip production as a “binding constraint on the pace of the AI compute buildout” as hyperscalers prepare to spend $700 billion or more on capital expenditures in 2026.

The shift is visible everywhere. A new ETF tracking memory chipmakers (ticker: DRAM) surged 90% within weeks of its April 2026 debut, accumulating over $6.25 billion in assets. Dave Mazza, CEO of the fund’s issuer, told CNBC: “Investors are waking up to the fact that the biggest bottleneck in the AI buildout is actually memory chips.”

2. What Is HBM? The Memory That Feeds the AI Beast

To understand why HBM is critical, you need to understand how AI models actually run. A large language model — GPT‑4, Claude, Gemini — consists of billions or trillions of parameters. Every time you ask a question, the GPU must rapidly move those parameters from memory to compute cores, perform calculations, and move the results back.

The math is unforgiving. NVIDIA’s H100 GPU uses 80GB of HBM3 with 3.35 terabytes per second (TB/s) of bandwidth. The H200 increased capacity to 141GB at 4.8 TB/s. The Blackwell B200 features 192GB of HBM3e achieving 8.0 TB/s, more than double the H100’s bandwidth. The upcoming Rubin R100 will pack 288GB of HBM4 with estimated bandwidth between 13 and 15 TB/s: roughly 3.6 times the H100’s capacity and about four times its bandwidth in just three generations.
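The generational ratios can be checked directly from the figures quoted in this section. The sketch below is only arithmetic on those numbers; the `SPECS` table and the `ratios_vs_h100` helper are names introduced here for illustration, and the R100 bandwidth is an estimate, so it is kept as a range.

```python
# Capacity (GB) and bandwidth (TB/s) as quoted in the text.
# The Rubin R100 bandwidth is an estimate, so it is stored as a (low, high) range.
SPECS = {
    "H100": (80, (3.35, 3.35)),
    "H200": (141, (4.8, 4.8)),
    "B200": (192, (8.0, 8.0)),
    "R100": (288, (13.0, 15.0)),
}


def ratios_vs_h100(name):
    """Return (capacity ratio, low bandwidth ratio, high bandwidth ratio) vs the H100."""
    base_cap, (base_bw, _) = SPECS["H100"]
    cap, (bw_lo, bw_hi) = SPECS[name]
    return cap / base_cap, bw_lo / base_bw, bw_hi / base_bw


for name in SPECS:
    cap_r, lo_r, hi_r = ratios_vs_h100(name)
    print(f"{name}: {cap_r:.2f}x capacity, {lo_r:.2f}x to {hi_r:.2f}x bandwidth")
```

On these figures the R100 comes out at 3.6 times the H100's capacity and somewhere around 3.9 to 4.5 times its bandwidth.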

This progression reflects AI’s memory requirements scaling faster than Moore’s Law. A rough rule of thumb: each 1 billion parameters requires approximately 2GB of GPU memory in 16‑bit precision. Llama 3’s 70B variant needs around 140GB just to load — more than a single H100 can hold, forcing complex multi‑GPU setups.
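The rule of thumb is easy to turn into a quick estimate. The sketch below counts model weights only and ignores activations, the KV cache, and framework overhead, so the GPU counts are lower bounds; `weights_gb` and `min_gpus` are illustrative helper names, not part of any library.

```python
import math

BYTES_PER_PARAM_FP16 = 2  # 16-bit precision = 2 bytes per parameter


def weights_gb(params_billions, bytes_per_param=BYTES_PER_PARAM_FP16):
    """Memory needed just to hold the weights, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bytes_per_param


def min_gpus(params_billions, gpu_memory_gb):
    """Lower bound on GPU count: weights only, no activations or KV cache."""
    return math.ceil(weights_gb(params_billions) / gpu_memory_gb)


print(weights_gb(70))     # 70B parameters at 16-bit -> 140 GB
print(min_gpus(70, 80))   # H100 (80 GB)  -> 2
print(min_gpus(70, 141))  # H200 (141 GB) -> 1
print(min_gpus(70, 192))  # B200 (192 GB) -> 1
```

Real deployments usually need more headroom than this, since serving a model also requires memory for intermediate state.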

Why HBM, Not Regular Memory?

Traditional GDDR memory (used in gaming GPUs) simply cannot keep up. The critical difference is how HBM is built.

HBM achieves its enormous bandwidth through vertical stacking. Multiple DRAM dies are placed on top of each other, connected by thousands of tiny vertical wires called through‑silicon vias (TSVs). A single HBM3e stack delivers 1.2 TB/s of bandwidth, and AI accelerators typically use 4–8 stacks in parallel.
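To see why stack count matters, here is a rough back-of-envelope sketch. It multiplies the per-stack figure quoted above by the number of stacks, then applies a common simplification that is an assumption of this example rather than a claim from any vendor: when generating text one token at a time for a single request, a dense model reads all of its weights once per token, so memory bandwidth caps the token rate. Batching, caching, and sparse architectures change this picture considerably.

```python
def aggregate_bandwidth_tbs(stacks, per_stack_tbs=1.2):
    """Peak bandwidth of several HBM stacks running in parallel, in TB/s."""
    return stacks * per_stack_tbs


def max_decode_tokens_per_s(bandwidth_tbs, weights_gb):
    """Bandwidth-bound ceiling on tokens/s for one request.

    Assumes every token requires reading all weights once (dense model,
    batch size 1) and ignores KV-cache traffic and compute time.
    """
    return bandwidth_tbs * 1000 / weights_gb  # TB/s -> GB/s, then divide by GB per token


for stacks in (4, 8):
    print(f"{stacks} stacks: {aggregate_bandwidth_tbs(stacks):.1f} TB/s peak")

# A 70B-parameter model at 16-bit (about 140 GB) on an 8.0 TB/s accelerator.
print(f"{max_decode_tokens_per_s(8.0, 140):.0f} tokens/s ceiling")
```

The point of the estimate is that the ceiling scales with bandwidth, not with compute: doubling the arithmetic throughput of the chip would not raise it.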

3. The Supply-Demand Gap: 50–60% and Growing

The numbers are staggering. SK Hynix’s CFO Kim Jae‑joon stated unequivocally: “We have already sold out our entire 2026 HBM supply.” Micron’s CEO Sanjay Mehrotra echoed the sentiment: “Our HBM capacity for calendar 2025 and 2026 is fully booked.”

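The supply-demand imbalance is estimated at 50–60% for 2026. How large that is depends on the baseline: if demand exceeds supply by 50–60%, demand is about 1.5–1.6 times what suppliers can produce; if suppliers can fill only 40–50% of orders, demand is 2–2.5 times supply. Either way, demand is running somewhere between one and a half times and more than double what suppliers can produce.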
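A percentage gap can be measured against supply or against demand, and the two readings give different multiples. The sketch below shows both; the function name and the `relative_to` parameter are illustrative, and the source estimates do not say which baseline they use.

```python
def demand_to_supply_ratio(gap, relative_to):
    """Convert a fractional supply-demand gap into demand as a multiple of supply.

    relative_to="supply": gap = (demand - supply) / supply
    relative_to="demand": gap = (demand - supply) / demand
    """
    if relative_to == "supply":
        return 1 + gap
    if relative_to == "demand":
        return 1 / (1 - gap)
    raise ValueError("relative_to must be 'supply' or 'demand'")


for gap in (0.5, 0.6):
    over_supply = demand_to_supply_ratio(gap, "supply")
    over_demand = demand_to_supply_ratio(gap, "demand")
    print(f"gap {gap:.0%}: {over_supply:.2f}x if measured against supply, "
          f"{over_demand:.2f}x if measured against demand")
```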

Why Can’t They Just Build More?

Three structural realities explain why:

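1. HBM consumes 3–4x more wafer capacity than standard DRAM. Each HBM chip requires 12–16 DRAM dies stacked together, and bit for bit it takes 3–4 times the wafer capacity of standard PC memory. Producing a given amount of HBM therefore ties up the fab capacity that could otherwise make 3–4 times as much standard memory, with far more complex assembly.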

2. Validation cycles are extremely long. Every HBM stack must be validated with each specific GPU design. NVIDIA, AMD, and custom chip designers spend months qualifying memory before it can be deployed at scale.

3. New fabs take years. A semiconductor fabrication facility requires 2–3 years from groundbreaking to production. The current expansion wave will not deliver meaningful new capacity until 2028–2029. SK Hynix’s Chairman Chey Tae‑won warned that memory chip shortages could persist until 2030.
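The wafer trade-off in the first point can be sketched with simple arithmetic. The model below is a deliberate simplification with hypothetical inputs: it treats one wafer of standard DRAM as one unit of bits and one wafer of HBM as `1 / trade_ratio` units, using the 3–4x figure from the text. The wafer count and the share sent to HBM are made-up illustration values, not industry data.

```python
def bit_output(wafers, hbm_share, trade_ratio):
    """Relative bit output when a share of wafers is moved to HBM.

    One standard-DRAM wafer = 1 unit of bits.
    One HBM wafer = 1 / trade_ratio units (trade_ratio is 3 to 4 in the text).
    Returns (hbm_bits, standard_dram_bits).
    """
    hbm_bits = wafers * hbm_share / trade_ratio
    dram_bits = wafers * (1 - hbm_share)
    return hbm_bits, dram_bits


# Hypothetical fab: 100 wafers, a quarter of them shifted to HBM.
for ratio in (3, 4):
    hbm, dram = bit_output(100, 0.25, ratio)
    print(f"trade ratio {ratio}: HBM {hbm:.2f}, standard DRAM {dram:.2f}, "
          f"total {hbm + dram:.2f} (vs 100 with no HBM)")
```

Under these assumptions, every wafer moved to HBM shrinks total bit output, which is why rising HBM demand tightens supply of standard memory as well.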

The result is unprecedented pricing power. SK Hynix’s 12‑layer HBM3 pricing surged 260%, with two‑year cumulative increases exceeding 500%. SK Hynix’s inventory has dropped to just 4 weeks — far below the 8–12 week safety line.

4. How SK Hynix Became the HBM King

SK Hynix’s rise from sub‑$100 billion to nearly $1 trillion in just 16 months is not luck. It is the result of a strategic bet on HBM years before the AI boom accelerated.

The Numbers Behind the Surge

| Metric | 2025 | 2026 (Projected) |
|---|---|---|
| Stock performance | +274% | +200%+ (already) |
| Market cap | <$100B | ~$948B (May 14) |
| Operating profit | ~$36B | ~$165–200B+ (460%+ YoY) |
| KOSPI index | +75% | +86% (best in world) |

South Korea’s benchmark KOSPI index has become the best‑performing major stock market in the world, up more than 86% in 2026 after a 75% surge in 2025.

The HBM4 Dominance

SK Hynix has locked in its lead for the next generation of memory. According to Counterpoint Research, SK Hynix will account for 54% of the global HBM4 market in 2026, followed by Samsung at 28% and Micron at 18%.

Even more telling: Nvidia has allocated approximately 70% of its HBM4 demand for the Vera Rubin platform to SK Hynix, up from earlier estimates of around 50%. This close partnership — SK Hynix has been supplying Nvidia with HBM4 samples for months — has made the Korean memory maker indispensable to Nvidia’s product roadmap.

UBS highlighted SK Hynix’s standing among Big Tech clients, noting that it will be the first HBM3E supplier for Google’s latest Tensor Processing Units (TPUs). The company maintains a dominant position with a 62% share of HBM shipments and strong margins exceeding 50%.

5. The Ripple Effect: What HBM Shortage Means for Everyone

For AI Developers and Enterprises

HBM scarcity means costs are rising. Memory manufacturers have raised HBM prices by high‑teens to low‑twenties percent in 2026 contracts. Those costs will flow through to API pricing, cloud instance rates, and ultimately to end users.

Expect to see:

- Higher inference costs for large models as memory becomes the new pricing lever.

- Longer wait times for GPU allocation as hyperscalers ration scarce HBM capacity.

- More aggressive model optimization — pruning, quantization, distillation — to reduce memory footprints.

For Consumers and Gamers

The HBM shortage has cascading effects. NVIDIA plans to slash RTX 50‑series GPU production by 30‑40% in H1 2026 because memory suppliers have shifted GDDR7 capacity to more profitable HBM production for data centers. Consumer graphics cards now compete with AI servers for the same limited memory resources.

For the Global Economy

If SK Hynix crosses the trillion‑dollar threshold, South Korea will become the first country outside the United States with two trillion‑dollar companies (joining Samsung, which crossed the milestone earlier in May 2026). The KOSPI’s near‑vertical climb — up more than 86% in 2026 — is almost entirely driven by these two memory giants.

This concentration creates vulnerability. As Finimize noted, record highs now depend on a small number of share prices: “Day‑to‑day moves in those two stocks can increasingly steer how ‘Korea’ performs in global portfolios.”

6. The Future: What Comes After HBM?

The memory supercycle is just beginning. Bank of America defines 2026 as a “supercycle similar to the boom of the 1990s,” forecasting global DRAM revenue to surge 51% and NAND by 45% year‑over‑year. BofA named SK Hynix as its global memory industry “Top Pick.”

Projected Market Size

| Metric | 2025 | 2026 | 2028 |
|---|---|---|---|
| Global semiconductor market | ~$780B | ~$975B (25% growth) | $1T+ |
| HBM market | ~$35B | ~$55B (58% growth) | $100B+ |
| SK Hynix operating profit | ~$36B | ~$165–200B | Projected $250B+ |

The HBM market alone is expected to surpass $100 billion by 2028, up from $35 billion in 2025, a compound annual growth rate of roughly 42%. Some forecasts suggest that the HBM market in 2028 will exceed the entire DRAM market of 2024.
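The growth rate follows from the standard compound annual growth rate formula applied to the endpoints quoted above. This is a minimal sketch; `cagr` is an illustrative helper, and the inputs are the rounded market-size figures from the table, so the result is only as precise as those.

```python
def cagr(start, end, years):
    """Compound annual growth rate between two values over a number of years."""
    return (end / start) ** (1 / years) - 1


# HBM market, in billions of dollars: ~35 in 2025 to ~100 in 2028 (three years).
print(f"2025 to 2028: {cagr(35, 100, 3):.1%} per year")

# Single-year step from ~35 in 2025 to ~55 in 2026.
print(f"2025 to 2026: {cagr(35, 55, 1):.1%}")
```

Because the 2028 figure is a floor ("$100B+"), the computed rate is a lower bound on what the forecast implies.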

What’s Next in Memory Technology

The evolution is far from over. The industry is already moving toward HBM4 (entering production in 2026, with 16‑Hi stacks targeting Q4 2026) and HBM4E beyond. Each generation pushes bandwidth higher and power consumption lower — but also increases manufacturing complexity and lead times.

OpenAI has already signed long‑term agreements with Samsung and SK Hynix locking in HBM capacity through 2029. Nvidia’s Jensen Huang has taken an even more aggressive stance: “How much capacity — we’ll take it all”.

Frequently Asked Questions (FAQ)

Q1: Why is HBM memory so important for AI?

A: HBM acts as the ultra-fast, short‑term memory for AI chips. GPUs can compute incredibly quickly, but they cannot function without data being fed to them at equally high speeds. HBM provides the bandwidth AI models require. Without it, the most powerful GPU would sit idle waiting for data.

Q2: What is the current HBM supply‑demand gap?

A: Industry estimates place the HBM supply‑demand imbalance at 50–60% in 2026. All major suppliers (SK Hynix, Micron, Samsung) have sold out their entire 2026 production capacity months in advance. New orders are currently being scheduled for Q1 2027 or later.

Q3: Can Samsung catch up to SK Hynix in HBM?

A: Possibly, but not immediately. Samsung is expected to capture approximately 28% of the HBM4 market in 2026. It has recently passed quality validation for Nvidia and AMD HBM4 products and will begin official supply in the coming months. Even so, with Nvidia allocating approximately 70% of its Vera Rubin HBM4 demand to SK Hynix, SK Hynix is expected to maintain the overall volume lead.

Q4: How does this affect the cost of using AI?

A: Memory now accounts for roughly 30% of hyperscaler AI spending, up from only 8% in 2023 and 2024. As HBM prices rise, expect higher inference costs, increased cloud instance rates, and continued upward pressure on API pricing.

Q5: Will the HBM shortage affect consumer GPUs?

A: Yes. Memory suppliers have shifted capacity away from GDDR (used in gaming GPUs) to more profitable HBM production. NVIDIA plans to cut RTX 50‑series production by 30–40% in the first half of 2026 because of these constraints.

Q6: When will the HBM shortage end?

A: Not soon. SK Hynix’s Chairman Chey Tae‑won warned that memory chip shortages could persist until 2030. New fabrication facilities under construction today will not deliver meaningful additional capacity until 2028–2029. The supply-demand imbalance is structural, not temporary.

Q7: How does this connect to your previous articles on AI infrastructure constraints?

A: This is the next chapter in AI’s infrastructure crisis. We previously covered power grid and transformer shortages. Now the bottleneck has moved further upstream into the semiconductor supply chain itself — specifically HBM memory. Each chapter reinforces the same message: AI runs on physical infrastructure, and every layer is under unprecedented strain.

Q8: What should companies do to prepare?

A: Secure long‑term memory contracts where possible. Optimize models to reduce memory footprint (quantization, pruning, distillation). Consider smaller or specialized models for inference tasks. And monitor HBM pricing trends as a leading indicator of AI compute costs.
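To make the optimization advice concrete, the sketch below estimates how weight precision changes the memory footprint of a 70B-parameter model and whether the weights alone would fit in 80GB, the H100 capacity cited earlier. It counts weights only and says nothing about accuracy loss from quantization; `quantized_weights_gb` is an illustrative helper name.

```python
def quantized_weights_gb(params_billions, bits_per_param):
    """Weight memory in GB at a given precision (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_param / 8


GPU_MEMORY_GB = 80  # H100 capacity, as cited in the article

for bits in (16, 8, 4):
    gb = quantized_weights_gb(70, bits)
    verdict = "fits" if gb <= GPU_MEMORY_GB else "does not fit"
    print(f"{bits}-bit: {gb:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Halving the precision halves the footprint, which is why quantization is usually the first lever teams reach for when memory is the constraint.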

Conclusion – Memory Is the New Compute

The AI industry spent 2024 and 2025 worrying about GPUs and power. In 2026, the bottleneck has shifted to something more fundamental: memory. The chips that feed data to AI accelerators have become the scarcest component in the entire supply chain.

SK Hynix’s trillion‑dollar valuation is not a bubble. It is a market signal that memory has become as strategic as compute. The company that bet early on HBM — that built the manufacturing capacity, secured the Nvidia relationship, and scaled production — now holds a choke point on the entire AI industry.

For the rest of us, the implications are clear. AI costs will continue to rise. Access will be rationed. And the physical reality of artificial intelligence — the chips, the memory, the power, the water — will become impossible to ignore.

The era of cheap, unlimited AI compute is over. Welcome to the age of memory scarcity.
